Elon Musk is planning to start a colony on Mars. Jason Torchinsky suggested some improvements to Musk’s proposed spaceship design, but some commenters on social media questioned his suggestions. I’ve reproduced these comments below, so that I can link to them more easily.

Amateur rocket engineer Evan Daniel writes:

1) I’m not sure how luxurious the actual craft will be. It should clearly be more luxurious than Apollo, to keep the passengers sane. But it being more spartan than Elon was talking about, especially early, seems likely.

2) Elon clearly likes the simplicity of only one upper stage hull design. The cargo, passenger, and fuel versions share a hull. This makes a great deal of sense for version one to me. Adding a second, third, or fourth major ship type is for later.

2a) If the author is keen on their hab module thing, they might as well go all the way to a cycler, which plenty of other people have talked about. I’m confused by them not mentioning this along with the L1 garage idea, given that it should further save propellant.

3) That means you don’t actually want to shrink the passenger ship. Sure you could build everything, first stage and fuel stages included, to a smaller scale… but the large scale is part of why it will be cheap per passenger or per pound. So “more spartan” translates as “more passengers” or “more cargo on board”, not “smaller ship”.

3a) That combined with number 2 might mean that open space is weirdly cheaper than you’d think. I’d have to investigate in more detail (aka break out the spreadsheets) to be sure. I’m not certain on this. (Mass for stuff is definitely still as pricey as you think, but the passenger version might have “too much volume” because the fuel-carrying version needs it for tanks and they share a hull.)

4) On-orbit transfer of people is complicated. Propellant transfer is far less so. The ITS as proposed is actually a very conservative design in some ways; these changes are less so. In particular they cost development money in an attempt to save operating costs, while making operations more complex. This seems misguided, given that SpaceX is probably short on funds for development (relatively speaking). Before you call for making operations more complex, think hard about the F9H schedule slips (while noting that F9H should be cheaper per pound launched than F9).

Anyway, I definitely don’t have enough info to say who is right. But I definitely know enough to think these proposed changes are not obviously a good thing. Especially the parts that advocate for more complex development and operations in an attempt to reduce operating costs. I’ll put my money on the SpaceX crew mostly knowing what they’re talking about in this case. (I’d also put money on the ITS having meaningful changes from this before its first passenger-carrying Mars flight, but I suspect they won’t be the ones listed here.)

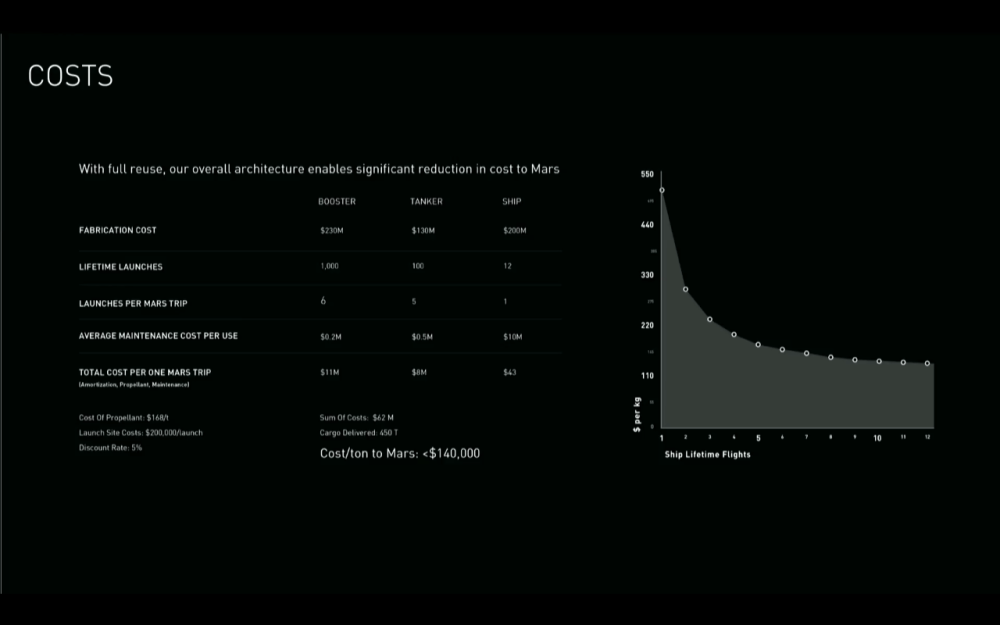

Or, more simply: the author didn’t pay enough attention to the most relevant slide of all:

The dominant cost of the flight to Mars is the ship that goes to Mars. Not the stage to launch it, not the other launches to fuel it. The dominant cost of that ship is the development and construction cost. If your “cost saving” measures are saving elsewhere by making that budget item bigger, you’re probably doing it wrong.

James Tillman writes:

Well, one difficulty with the proposed “space only” module is that you cannot aerobrake with it. The proposed Muskian solution is to aerobrake as you’re entering the Mars atmosphere, and decrease overall fuel requirements thus for going from Earth to Mars; you can also aerobrake when entering Earth’s atmosphere, and decrease fuel requirements for going from Mars to Earth. So that’s a big cost. This solution will require fuel for braking while going Earth to Mars, and Mars to Earth, for both the hab and the lander.

Someone raises this point in the comments, and [Torchinsky] says, “throw an aerobraking shell on it,” but I’m not sure it’s that simple. An inflatable structure designed for zero g–which is the whole attraction of the concept–wouldn’t work well aerobraking, I’d guess.

The second difficulty is re fuel requirements. Decreasing aerobraking means that you’ll need more fuel for the Mars to Earth rendezvous, and that makes me wonder if the smaller ship will have difficulty getting enough fuel through in-situ methane generation to both launch from Mars, push the larger structure to Earth, then brake the larger structure and itself so they’re in orbit. So you’re dealing with potentially (absolutely) increased fuel requirements, unless you can cut the mass by enough, while you’re also decreasing the amount of fuel you bring from the Martian surface.

Oh, re the concern with difficulty landing the ship on Mars–Mars dust storms are extremely weak (least accurate part of The Martian), compared to Earth storms, so that would be no problem.

Suggestion re launching the tanker before the crewed ship–this has been raised, I think Musk has said they might do this, unsure of advantages and disadvantages.

Of course I’d need to do the math to really know anything about this.
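Tillman's point that braking with engines instead of aerobraking raises fuel requirements can be illustrated with the Tsiolkovsky rocket equation. The sketch below is only an illustration under stated assumptions: the specific impulse and both delta-v figures are hypothetical placeholders, not values from SpaceX, Torchinsky, or the commenters, and `propellant_fraction` is a name made up for this example.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert Isp to exhaust velocity


def propellant_fraction(delta_v_m_s, isp_s):
    """Fraction of a vehicle's initial mass that must be propellant.

    Rearranged Tsiolkovsky rocket equation:
        delta_v = isp * g0 * ln(m_initial / m_final)
    """
    exhaust_velocity = isp_s * G0
    return 1.0 - math.exp(-delta_v_m_s / exhaust_velocity)


# ASSUMPTION: an illustrative specific impulse for a methane/oxygen engine.
ASSUMED_ISP_S = 380.0

# ASSUMPTION: hypothetical delta-v budgets, chosen only to show the trend.
DV_WITH_AEROBRAKING = 1000.0     # m/s, most braking done by the atmosphere
DV_WITHOUT_AEROBRAKING = 3000.0  # m/s, all braking done by engines

for label, dv in [("with aerobraking", DV_WITH_AEROBRAKING),
                  ("without aerobraking", DV_WITHOUT_AEROBRAKING)]:
    frac = propellant_fraction(dv, ASSUMED_ISP_S)
    print(f"{label}: {frac:.0%} of initial mass is propellant")
```

Because the relationship is exponential, every extra increment of propulsive braking costs more propellant than the one before it, which is why giving up aerobraking is not a small change.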

And mathematician Jonathan Lee adds:

The Soyuz is lighter than the Apollo capsules because the Orbital and instrument modules did not have to be recovered successfully; they burned up on Earth entry. It’s an approach that’s useful when you do not need everything.

The object of the ITS is to, well, get one’s ass to Mars. The galleys, toilets etc. (which are not actually the main mass drivers but let us not have mere facts get in the way) need to get down onto the surface, at least until the crew have built out a bunch of extra infrastructure. This needs to go there. Fuel on Mars is pretty explicitly not precious; the entire architecture is based around solar driving electrolysis and Sabatier reactions to make fuel. You actually want the lander to have enough delta-V to push the entire Mars – Earth return stack back to Earth. Scale is actually important here. They are ultimately not trying to get minimum cost flags-and-footprints. They are trying to build an architecture that reliably moves 200-500 tonnes of stuff to Mars. That is the point. To get enough mass there that you can build a self-sustaining colony in a place colder, drier and with less atmosphere than Antarctica. They need the mass moved.

Oh, winds. Ha. Yeah, so the atmosphere on Mars is about 1% the density of that of Earth. Wind forces go like the square of velocity, so you can convert Mars winds to Earth winds by dividing velocity by 10. The highest wind speeds that I can find reported for Mars are about 90mph (solid hurricane territory). So the dynamic pressures are about that of a 10mph wind on Earth. So, no, wind is not a problem.

The in-transit model being proposed cannot aerobrake, cannot even pretend to provide radiation protection, and means that now you need to have orbital assembly at both Mars and Earth on every launch. Oh, and have those docked connections take the not-inconsiderable forces of the injection burns each way.
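Lee's wind arithmetic can be checked with a short sketch. It assumes the standard dynamic pressure formula, q = ½ρv², and takes the "about 1%" density ratio and the 90 mph figure directly from his comment; the function names are made up for this example.

```python
import math

# From the comment: Mars's atmosphere is about 1% the density of Earth's.
MARS_TO_EARTH_DENSITY_RATIO = 0.01


def dynamic_pressure(density, speed):
    """Dynamic pressure q = 0.5 * rho * v^2 (units follow the inputs)."""
    return 0.5 * density * speed ** 2


def earth_equivalent_wind(mars_wind_speed,
                          density_ratio=MARS_TO_EARTH_DENSITY_RATIO):
    """Earth wind speed that produces the same dynamic pressure.

    Setting 0.5 * rho_mars * v_mars^2 equal to 0.5 * rho_earth * v_earth^2
    gives v_earth = v_mars * sqrt(rho_mars / rho_earth).
    """
    return mars_wind_speed * math.sqrt(density_ratio)


mars_wind_mph = 90.0  # highest reported Mars wind speed cited in the comment
print(f"{mars_wind_mph:.0f} mph on Mars pushes about as hard as "
      f"{earth_equivalent_wind(mars_wind_mph):.0f} mph on Earth")
```

With a density ratio of 0.01 the square root is 0.1, which is where the "divide velocity by 10" rule comes from: a 90 mph Martian wind pushes about as hard as a 9 mph breeze on Earth, consistent with the roughly 10 mph quoted.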

I asked aerospace engineer Lloyd Strohl III to review this post, and he affirmed that Daniel and Lee’s comments here look correct.